Abstract

Common approaches to problems involving multiple modalities (classification, retrieval, hyperlinking, etc.) are early fusion of the initial modalities and crossmodal translation from one modality to the other. Recently, deep neural networks, especially deep autoencoders, have proven promising both for crossmodal translation and for early fusion via multimodal embedding. In this work, we propose a flexible crossmodal deep neural network architecture for multimodal and crossmodal representation. By tying the weights of two deep neural networks, symmetry is enforced in central hidden layers thus yielding a multimodal representation space common to the two original representation spaces. The proposed architecture is evaluated in multimodal query expansion and multimodal retrieval tasks within the context of video hyperlinking. Our method demonstrates improved crossmodal translation capabilities and produces a multimodal embedding that significantly outperforms multimodal embeddings obtained by deep autoencoders, resulting in an absolute increase of 14.14 in precision at 10 on a video hyperlinking task (alpha=10-4).

Overview

At Linkmedia, as the name suggests, we work with different modalities and find ways to link them into coherent wholes. When I first started working with multimodal data, I normally started by fusing scores separately and progressing to multimodal autoencoders. However, improvements were often marginal and we soon started analyzing possible alternative ways of performing multimodal fusion.

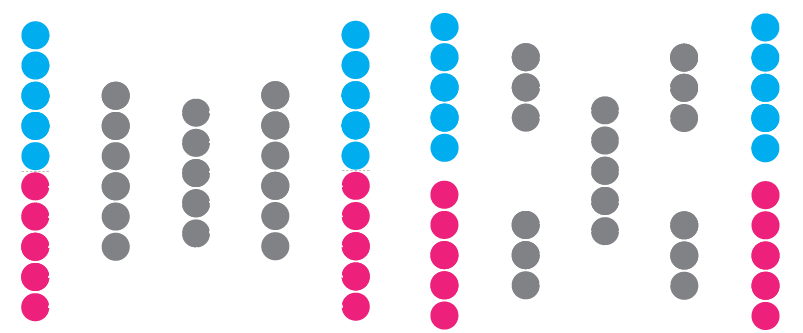

Multimodal fusion of disjoint single-modal continuous representations is typically performed by multimodal autoencoders. Two types of autoencoders are often used: i) a simple autoencoder (illustrated below, on the left) that is typically used for dimensionality reduction of a single modality but is here presented with both modalities concatenated at its input and output and ii) a multimodal autoencoder containing additional separate branches for each modality (illustrated on the right). With all autoencoders, noise is often added to the inputs in order to make them more robust. In the case of multimodal autoencoders, one modality is sporadically zeroed at input while they're expected to reconstruct both modalities at their output.

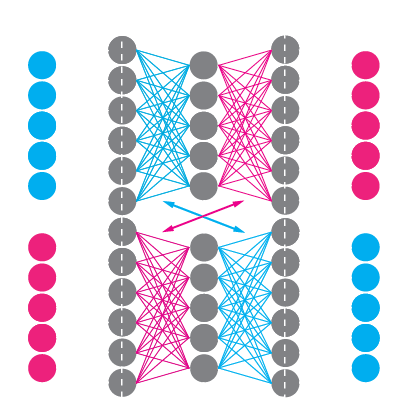

Our approach to multimodal fusion is crossmodal. Instead of reconstructing two modalities with a specialized multimodal autoencoder, we train two crossmodal translations with two separate neural networks, one to translate from e.g. speech to visual representations and the other to translate in the opposite direction, e.g. from visual to speech representations. We call this architecture "Bidirectional (symmetrical) Deep Neural Network" or BiDNN for short. The "symmetrical" part in the title does not come solely from the symmetry of the architectures of the two networks but also from an enforced symmetry in the weights of the central layers of the two networks. While training, we add an additional restriction saying that the weights of one network should be the same as the weights of the other network transposed. This enforces the two crossmodal translations to be more symmetric and have a common representation space in the middle where both modalities can be projected and compared. The architecture looks as illustrated:

In terms of equations, each network looks quite simple and stand-alone, except that for the 2nd and 3rd layers, the weight matrices W are shared between the two networks:

We performed the initial analysis with MediaEval's 2014 video hyperlinking dataset (formed post-evaluation) and used averaged Word2Vec for speech transctripts and visual concepts (provided as part of the challenge by KU Leuven). We compare simple score fusion (linear combination of scores), classical multimodal autoencoders and our new approach, BiDNNs:

| Method | Precision @ 10 (%) | Std. dev. (%) |

|---|---|---|

| Baseline | ||

| Transcripts | 58.67 | - |

| Visual concepts | 50.00 | - |

| Linear combination | 61.32 | 3.1 |

| Multimodal fusion | ||

| AE #1 (illustrated on the left) | 57.40 | 1.24 |

| AE #2 (illustrated on the right) | 59.60 | 0.65 |

| BiDNN | 73.74 | 0.82 |

By fusing this visual information (that yields 50.00% by itself) and speech information (that yields 58.67% by itself) in a crossmodal fashion, we're able to achieve 73.74% in terms of precision at 10. This was later improved, with better initial single modal representations that allowed us to achieve 80% in terms of precision at 10. We also used this method at TRECVID's 2016 video hyperlinking challenge where we obtained the best score and defined the new state of the art.

As a conclusion, I'd like to say: To improve on single modal retrieval, go multimodal. To improve on multimodal retrieval, go crossmodal.